As many of you might have already heard, Google has introduced a new cooperation program with four CDN providers: CloudFlare, Fastly, Highwinds and Level3. The Google Cloud Platform CDN Interconnect Program consists on giving CDN providers access to route their traffic through Google’s private high-speed links, so they can serve their customers through reliable, low-latency routes thanks to Google’s infrastructure.

After reading the news, the ProbeAPI team got curious to find out how much of a performance gain is there to expect using this service, if any at all. Taking advantage of the large number of probes available at ProbeAPI, we set up an experiment to put this interesting new infrastructure to the test.

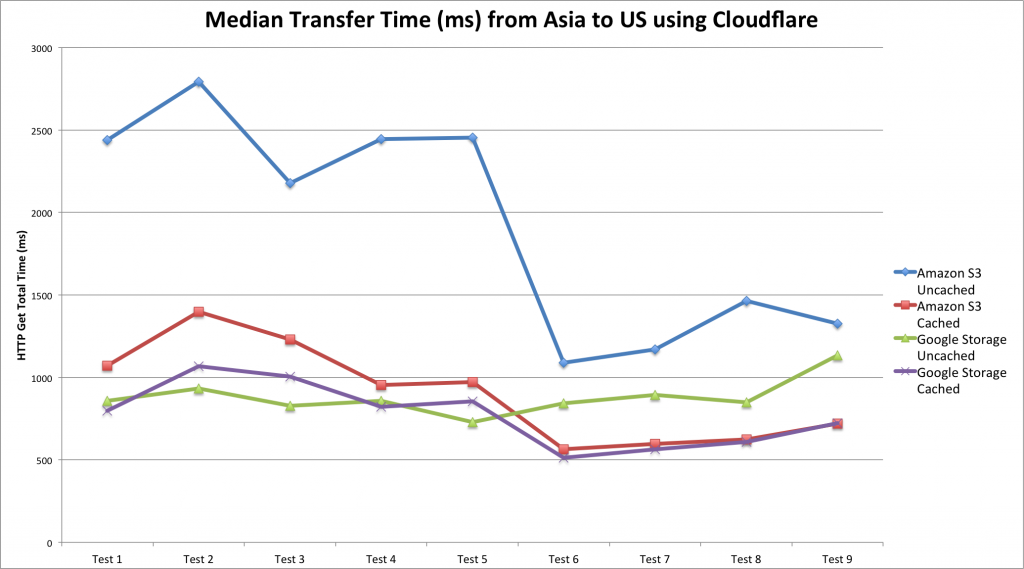

We used Amazon’s S3 and Google Storage as cloud storage providers and CloudFlare as CDN. To test similar routes, we chose the server in Singapore for our Amazon S3 bucket and the Asia server for the Google Storage bucket. We chose a maximum of 100 Probes in the USA as destination for our transfer tests.

After connecting and configuring the buckets to make them accessible through the CDN, we put the files to be tested in the buckets: several randomly generated 1.1MB PDF files, since PDF is one of the file extensions cached by CDNs.

Our objective is to measure the transfer times of those files and find out how long does it take to the CDN to cache those files for each storage provider. That means: we want to compare the transfer times of a cached file vs an uncached file from each bucket. We take the difference of those transfer times and we get the time it takes the CDN to cache a file, based on the delay caused by the caching and assuming that a previously cached file will transfer faster.

We test two files per bucket, let’s call them A and B. We make a pre-test running a Http Get test from ProbeAPI calling only the A file from both buckets. Running this pre-test made file A get cached in the US Servers of CloudFlare. Now we are in condition to run the real test. So we ran an Http Get test using ProbeAPI with files A and B on both buckets. So this is what happens: We cached file A with the pre-test, because of this, file A is expected to transfer faster than file B, which will have to be transferrred from Asia to the US CDN servers like file A did on the first test. Because this takes a bit extra time to do, we can calculate the overhead caused by the caching.

Results

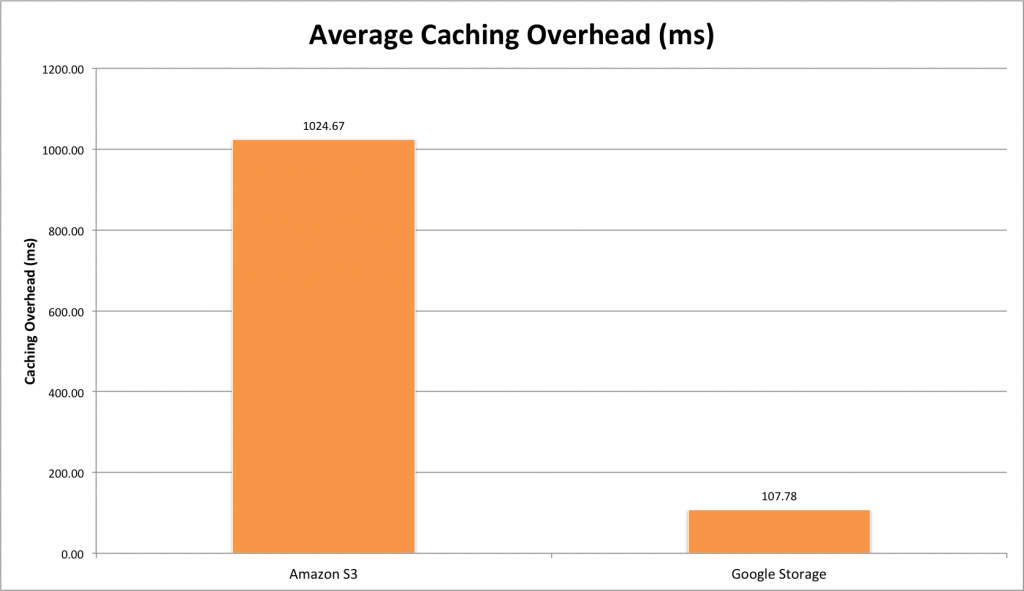

After just a few tests with ProbeAPI, the first thing that strikes you, is the amazing speedup in transferring files preloaded in the CDN cache, especially for Amazon servers. There was also a noticeable improvement in the uncached Amazon’s performance after the 5th Test. Either because of sudden changes in network conditions or some load balancing mechanism reduced the caching overhead enormously because of that route being used repeatedly by hundreds of probes across the US.

Now getting to the point. We can observe Amazon’s amazing speedup when the file is already cached, even surpassing uncached Google Storage performance after some tests, which is already fast by itself.

Google’s buckets performed well altogether and that’s where we can clearly see the power of this infrastructure. The overhead introduced by the caching process when using Google + CloudFlare is minimal compared to the one introduced from Amazon + CloudFlare. This is due to the evident performance upgrade brought by this new partnership with Google, with CloudFlare now being able to use Google’s infrastructure to transfer data from the Datacenter to the CDN in the blink of an eye.

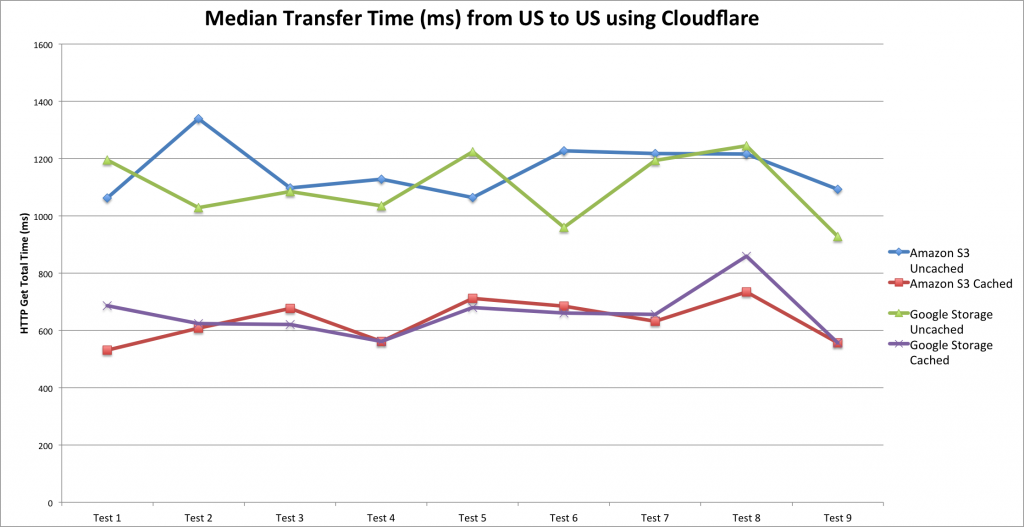

We decided to run the tests once more, using the same methodology but transferring files from US servers to probes located in the US as well. This is a very likely scenario, thus making this set of measurements very interesting.

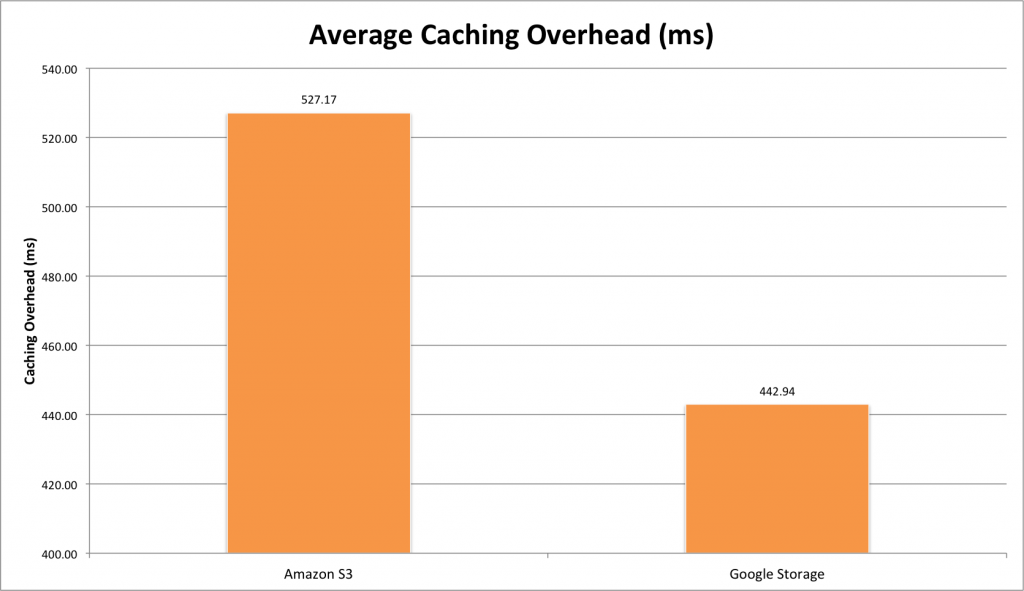

Here we can observe the expected scenario again: Uncached files take longer to deliver than files already cached in the CDN. In this case the difference is (also expectedly) less dramatic, due to the US-US traffic routing. The uncached files take similar amounts of time to load, although there’s still a noticeable overhead improvement when measuring transfers from Google’s buckets.

Here we can observe the expected scenario again: Uncached files take longer to deliver than files already cached in the CDN. In this case the difference is (also expectedly) less dramatic, due to the US-US traffic routing. The uncached files take similar amounts of time to load, although there’s still a noticeable overhead improvement when measuring transfers from Google’s buckets.

Even with your content being available locally in the US itself, the benefits of this CDN-Google partnership are still evident and relevant.

Even with your content being available locally in the US itself, the benefits of this CDN-Google partnership are still evident and relevant.

Analysis and Conclusion

We are living exciting times, with the Internet becoming ever faster and adopting more sophisticated connectivity year after year. This is one example of how the Net is adopting optimized structures. Some years ago we wouldn’t have dreamed of having our content available practically locally everywhere in the world, that’s one for CDNs.

Now with this cooperation, not only that is possible, but also your newest and updated content gets much faster to its destination and this is where the major beneficiaries of Google’s Interconnect platform lie, whose content is constantly changing, updating, adding new files and want them to be rapidly distributed for a virtually seamless availability all over the world.

Even with your content travelling shorter distances, like our US-US test showed, the benefits of serving customers faster and more reliably are still very noticeable and could be critical in certain scenarios: e.g. during a flash crowd, when your content (or part of it) becomes highly popular overnight. This would be a critical situation where you want to serve everybody without decreasing the quality of your service. The best part is that it works automatically and such a scenario that haunted administrators in the past, is becoming less and less fearsome thanks to CDNs and now even updated content is able to reach its destination with a short overhead.